By Alex Li

On June 7, IETF contributor Robin Marx announced on Twitter: "After five years of work, HTTP/3 was finally standardized as RFC 9114!"

Source: Twitter

This is a big moment for the Internet.

As the latest HTTP version, HTTP/3 will bring significant opportunities and challenges.

To get a better understanding of this newly published standard, we invited Robin Marx for an interview and asked him to explain it in detail.

Photo provided by Robin Marx

In 2015, Robin started researching HTTP/2 performance as part of his PhD, which gave him the opportunity to contribute to HTTP/3 and QUIC in the IETF. While working on these protocols, Robin created QUIC and HTTP/3 debugging tools (called qlog and qvis) that have benefited many engineers around the world.

In this email interview, Robin talks about the advantages of HTTP/3 and QUIC, the challenges of designing HTTP/3, the obstacles to HTTP/3 adoption, and the future development of the Internet.

Robin also tells us how he started his protocol journey and what he expects from his new role at Akamai.

The following is our conversation with Robin Marx.

LiveVideoStack: Hi, Robin, thank you so much for being here with us. Before we start, could you briefly introduce yourself?

Robin Marx: Happy to be here! I got involved with network protocols when I started researching HTTP/2 performance in 2015 as part of my PhD. This later gave me the unique opportunity to contribute to HTTP/3 and QUIC in the IETF (Internet Engineering Task Force) while they were being designed. I specifically made a series of debugging and testing tools (called qlog and qvis) that helped battle-test implementations and uncovered many bugs and problems in the specs. All of this culminated in a job as Solutions Architect/Web Performance Expert at the CDN company Akamai, which I’ll start in August.

LiveVideoStack: Compared to HTTP/2, what are the major improvements in HTTP/3?

Pic provided by Robin

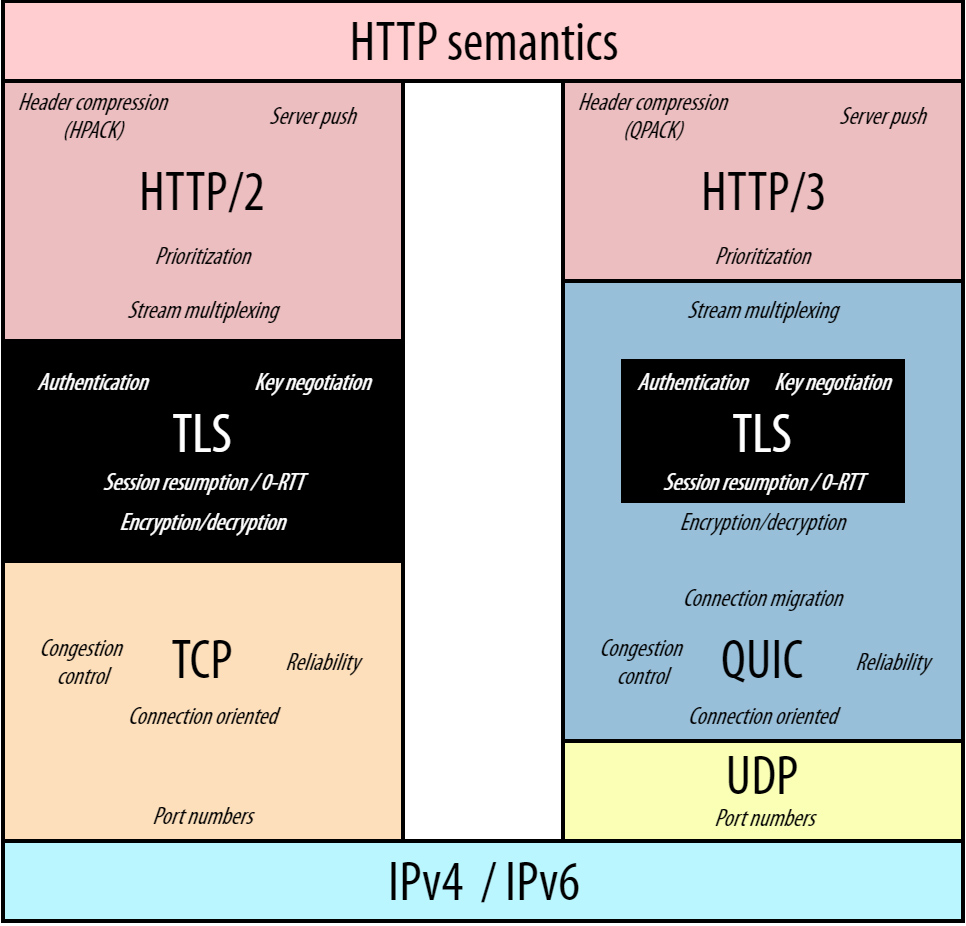

Robin Marx: At a high level, HTTP/3 as a protocol is actually very similar to HTTP/2. It offers the same high-level features like header compression, server push (though that’s still not recommended for common use) and stream prioritization (though in a simpler, easier to use way). The main thing that has changed is what protocols are being used “underneath” HTTP: this was TCP+TLS for HTTP/2, while for HTTP/3 it has changed to QUIC, a new transport protocol that deeply integrates with TLS. QUIC and its usage of TLS provide many benefits for HTTP/3 compared to HTTP/2, mainly in terms of (Web page loading) performance.

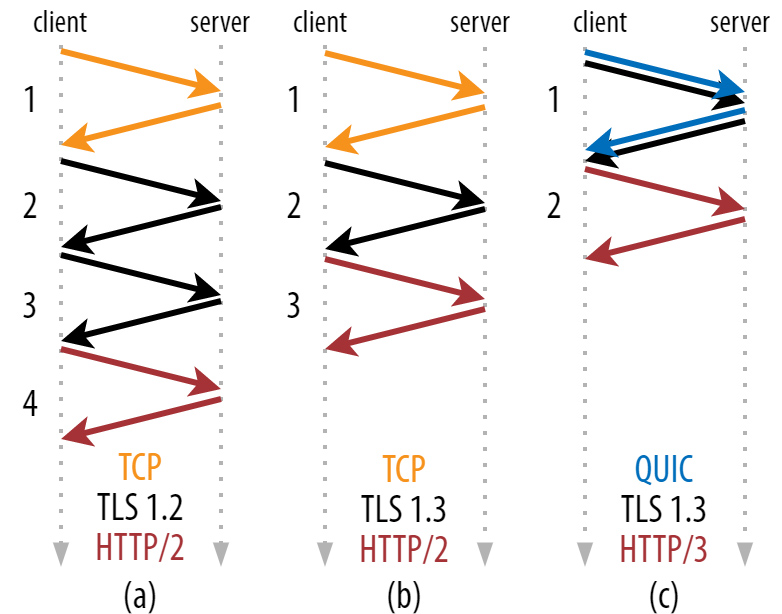

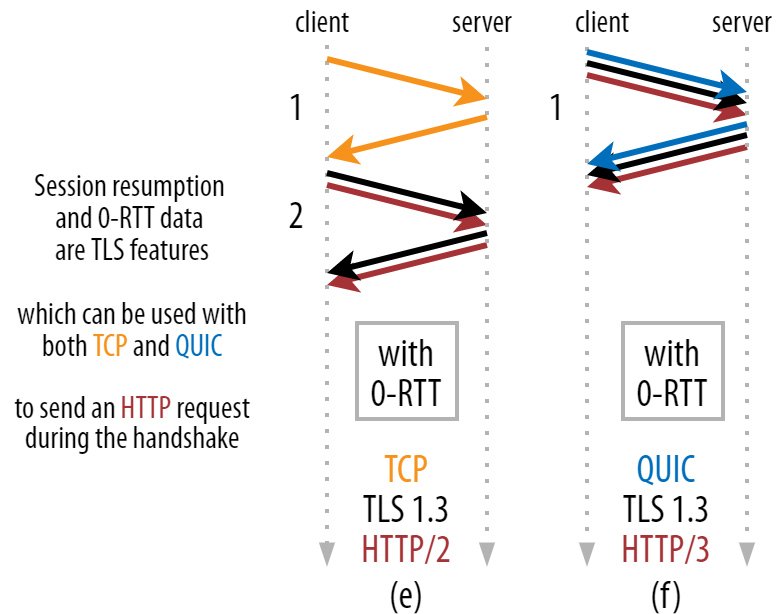

Firstly, QUIC has a faster connection setup, as it can combine the transport-level handshake (TCP’s SYN+SYN/ACK+ACK bootstrap) and the cryptographic handshake (TLS’s setup) into a single round trip (where HTTP/2 over TCP+TLS needs 2 to 3 round trips). Furthermore, HTTP/3 can profit from TLS 1.3’s 0-RTT feature: when a client reconnects to a server it has talked to before, an HTTP request can be sent, and a (partial) response can be received, during the very first handshake! Especially in cases where client and server are geographically distant, this can help considerably.
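To make the round-trip arithmetic above concrete, here is a minimal back-of-the-envelope sketch. It assumes a simplified model in which the time to the first response byte is the number of handshake round trips plus one round trip for the request itself, and it ignores server processing time, bandwidth, packet loss and TCP Fast Open. The dictionary labels and the helper name are illustrative, not part of any library.

```python
# Handshake round trips before the first HTTP request can be sent
# (simplified model; labels are illustrative).
HANDSHAKE_RTTS = {
    "HTTP/2 over TCP + TLS 1.2": 3,            # TCP (1) + full TLS 1.2 handshake (2)
    "HTTP/2 over TCP + TLS 1.3": 2,            # TCP (1) + TLS 1.3 handshake (1)
    "HTTP/3 over QUIC (new connection)": 1,    # transport + crypto combined
    "HTTP/3 over QUIC with 0-RTT (resumed)": 0,  # request rides in the first flight
}


def time_to_first_byte_ms(handshake_rtts: int, rtt_ms: float) -> float:
    """Handshake round trips plus one more for the request/response exchange."""
    return (handshake_rtts + 1) * rtt_ms


# Example: a client far from the server, with a 150 ms round-trip time.
for setup, rtts in HANDSHAKE_RTTS.items():
    print(f"{setup}: {time_to_first_byte_ms(rtts, 150):.0f} ms")
```

Under these assumptions, the saving grows linearly with the round-trip time, which is why distant clients benefit most.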

Pics provided by Robin

Secondly, QUIC takes the concept of multiple individual bytestreams from HTTP/2 and brings it down to the transport protocol level. This is a bit technical to understand, but the end result is that it improves QUIC’s (and thus HTTP/3’s) resilience to packet loss and reordering. This solves a long-standing issue with TCP called the “Head-of-Line blocking” problem, in which a single lost packet on an HTTP/2 connection could block all data after it while waiting for a retransmission. In some cases, HTTP/3 can unblock some of that data to process and render or execute it earlier.
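The difference can be illustrated with a toy model. The sketch below assumes two resources multiplexed over one connection, that each stream’s data is sent in order, and that one packet is lost; it only shows which packets could be handed to the application before the retransmission arrives. It deliberately leaves out everything else a real stack does (ACKs, flow control, QPACK), and the names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    seq: int      # order in which the sender transmitted the packet
    stream: str   # which resource (stream) the packet's data belongs to


def deliverable_tcp(packets: list[Packet], lost: set[int]) -> list[int]:
    """TCP: one ordered bytestream, so nothing past the first gap reaches the app."""
    delivered = []
    for p in sorted(packets, key=lambda p: p.seq):
        if p.seq in lost:
            break  # all later data waits for the retransmission
        delivered.append(p.seq)
    return delivered


def deliverable_quic(packets: list[Packet], lost: set[int]) -> list[int]:
    """QUIC: ordering is enforced per stream, so a gap only stalls its own stream."""
    delivered = []
    stalled: set[str] = set()
    for p in sorted(packets, key=lambda p: p.seq):
        if p.seq in lost:
            stalled.add(p.stream)  # later data on this stream must wait
        elif p.stream not in stalled:
            delivered.append(p.seq)
    return delivered


# Two resources interleaved on one connection; packet 2 (CSS data) is lost.
packets = [Packet(i, "style.css" if i % 2 == 0 else "app.js") for i in range(6)]
print(deliverable_tcp(packets, lost={2}))   # [0, 1]
print(deliverable_quic(packets, lost={2}))  # [0, 1, 3, 5]
```

In this model the JavaScript packets that arrive after the lost CSS packet can still be used under QUIC, while TCP holds them back; that matches the hedge above that HTTP/3 unblocks data only "in some cases".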

Thirdly, QUIC has a smattering of other performance benefits. For example, there is the new “connection migration” feature that will make switching between networks (for example, moving from Wi-Fi to 4G) more seamless (though few deployments currently support it). QUIC is also smarter about how it acknowledges received packets, making it easier to decide how/when to retransmit lost packets and to tune congestion control logic. Finally, QUIC also allows both reliable and unreliable data in the same connection. This isn’t terribly useful for HTTP/3 by itself, but it will allow other interesting protocols such as WebTransport or MASQUE proxying to run over QUIC.

In conclusion: HTTP/3 primarily benefits from the major new QUIC protocol, and will continue to do so as new optimizations and features are added to QUIC down the road.

LiveVideoStack: Do you have any advice for people who are going to configure this new protocol?

Robin Marx: HTTP/3 and QUIC are notoriously complex, due to their advanced features and some nuanced security characteristics inherent to things like 0-RTT and load balancing. At this point, I strongly recommend not doing it yourself and choosing an existing deployment at one of the big Content Delivery Networks (CDNs) such as Akamai, Cloudflare or Fastly. Soon, you’ll also be able to use HTTP/3 out-of-the-box with Amazon Web Services and Azure, while it should already be available for Google’s hosting options.

LiveVideoStack: Will HTTP/3 have an impact on applications building on top of it? What changes would be made to the applications?

Robin Marx: Since HTTP/3 is so similar to HTTP/2, few if any changes are needed to websites to run properly over HTTP/3. This is quite different from when we moved from HTTP/1.1 to HTTP/2, which often required much bigger changes.

For applications, on the other hand (think native apps, for example), you will usually need to use a completely different software library for HTTP/3, which will typically also include an implementation of QUIC. I would recommend Cloudflare’s quiche, ngtcp2 or quicly, all open source and available on GitHub. In some rare cases, you can use a built-in platform implementation: iOS/macOS exposes HTTP/3 and QUIC via its networking services (see https://developer.apple.com/videos/play/wwdc2021/10094/) and .NET has an initial implementation in its latest version as well.

Using these implementations shouldn’t be too difficult if you just want to send/receive some data over HTTP/3 or QUIC. It gets more difficult when you really want to fine-tune your application to make optimal use of advanced features like 0-RTT or connection migration. For that, you really need to understand how they work, what their limitations are, and how to properly trigger them from the implementation’s API. Prepare for a few days of deep diving into source code ;)

LiveVideoStack: What does QUIC mean for other use cases, such as video streaming? How does it compare to WebRTC? And what about the new WebTransport?

Robin Marx: QUIC being a general purpose transport protocol indeed allows us to use it for more use cases than just Web page loading. Video streaming, both live and on-demand, is being heavily considered in a special “Media over QUIC” working group at the IETF. It is however still somewhat unclear how this can/should/will be done in the best way possible, since some existing offerings in this space (for example WebRTC) are already quite powerful and widely deployed, and it’s unclear if/how QUIC would provide much benefit. Additionally, it’s also quite difficult to just run existing protocols on the new QUIC logic, and they often require some changes. It’s still very early days, but the academic community has shown some interesting concepts, such as combining both reliable (for key frames) and unreliable (for delta-compressed frames) video data in a single QUIC connection. I don’t think we’ll see WebRTC-over-QUIC, but some future replacement is definitely in the cards.

WebTransport is another interesting direction, intended to expose a broader set of networking features to Web developers. While we’re currently somewhat limited to dry HTTP (via fetch()) or high-level streaming (with WebSockets), WebTransport will allow lower-level access to network features. We still won’t get access to raw UDP sockets, but WebTransport at least will allow for more optimized implementations of multiplayer games or, indeed, custom media streaming logic.

LiveVideoStack: You’ve been creating debugging tools for QUIC and HTTP/3 protocols, and could you tell us a bit more about these tools? What benefits can they bring to the engineers?

Robin Marx: My main tools are in a collection called qvis, available at https://qvis.quictools.info/. These are a collection of visualizations that show advanced features like congestion control, stream multiplexing and concrete packetization across the protocol layers. I’ve found that for these complex features, established tools like Wireshark are simply not powerful enough to provide fast and actionable insight for protocol implementers and people doing low-level tuning. For general users though, I’d expect they’ll never even need Wireshark, so qvis might be a bridge too far for them as well.

Another important note is that qvis utilizes a data logging format specific to QUIC and HTTP/3 called qlog (https://github.com/quicwg/qlog), instead of standard packet captures (.pcap files). This is for two main reasons: 1. QUIC is deeply encrypted at the transport level, so a lot of information, like packet numbers, is no longer visible without decryption (which is very different from TCP). 2. Packet captures do not include advanced information such as the current congestion control window, the estimated round-trip time, why a packet was marked as lost, etc. By having the endpoints (client and server) log this deep information directly, we can create much more powerful tools that help debug implementation behavior, and also avoid having to decrypt packet captures in deployment scenarios (as this would not just provide access to the QUIC packet metadata, but also the user’s personal data like passwords). qlog thus provides a privacy-sensitive and more powerful alternative to in-network packet captures. It is currently being standardized by the IETF as well.

LiveVideoStack: What were the biggest challenges you faced when designing HTTP/3? How did you overcome them?

Robin Marx: Originally, the intent was to run HTTP/2 over QUIC with only minor changes. However, when trying that, we quickly found that HTTP/2 made a few too many assumptions about how TCP works that are changed in QUIC. Main among them is the Head-of-Line Blocking problem; while this leads to inefficiencies, it also guarantees that all data arrives in the exact order it is sent. In QUIC however, this guarantee no longer exists: if the sender sends packets A and B, the second packet B might very well be delivered to the receiver application before A. This messes with so many of HTTP/2’s assumptions that we had to redesign many of its underlying mechanisms to provide the same high-level features in HTTP/3. Two systems that were almost completely overhauled are the QPACK header compression setup and the prioritization system. Figuring out how to approach the latter was actually a major part of my PhD research.

For QUIC, many more design challenges had to be tackled. This often took quite some time and many discussions among highly talented engineers from many of the larger Internet companies like Google, Facebook, Microsoft and Mozilla. One goal, for example, was to make the protocol overhead (the number of bytes used for protocol metadata instead of actual user data) as small as possible. This was achieved with many different clever compression tricks in several places, from the “Variable-Length Integer Encoding” to how acknowledgements are sent and how QUIC typically encodes a 64-bit packet number into just a single byte for most packets. Another thing that comes to mind is that QUIC sort of encrypts each packet twice: once for the payload, and once for the packet header. This was mainly needed to add support for updating encryption keys during the connection’s lifetime (important for long-running conversations) and took quite some time to figure out.
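Two of the compression tricks mentioned above are specified in RFC 9000: the variable-length integer encoding (Section 16), where the two high bits of the first byte announce a 1-, 2-, 4- or 8-byte field, and packet number truncation, where only the low 1 to 4 bytes of the packet number (which RFC 9000 allows to grow up to 2^62 − 1) are sent and the receiver reconstructs the rest from the largest packet number it has seen (sample algorithm in Appendix A.3). The following is a minimal Python sketch of both, written for illustration rather than taken from any QUIC library; the function names are our own.

```python
def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a QUIC variable-length integer."""
    # The two high bits of the first byte tell the receiver the encoded length.
    if value < 0:
        raise ValueError("QUIC varints are non-negative")
    if value < 1 << 6:
        return value.to_bytes(1, "big")                  # prefix 00: 1 byte, 6-bit value
    if value < 1 << 14:
        return (value | 0x4000).to_bytes(2, "big")       # prefix 01: 2 bytes, 14-bit value
    if value < 1 << 30:
        return (value | 0x8000_0000).to_bytes(4, "big")  # prefix 10: 4 bytes, 30-bit value
    if value < 1 << 62:
        return (value | 0xC000_0000_0000_0000).to_bytes(8, "big")  # prefix 11: 8 bytes
    raise ValueError("value does not fit in 62 bits")


def decode_varint(data: bytes) -> tuple[int, int]:
    """Decode one varint from the start of `data`; return (value, bytes consumed)."""
    length = 1 << (data[0] >> 6)  # 1, 2, 4 or 8 bytes
    if len(data) < length:
        raise ValueError("truncated varint")
    value = data[0] & 0x3F        # strip the 2-bit length prefix
    for byte in data[1:length]:
        value = (value << 8) | byte
    return value, length


def decode_packet_number(largest_pn: int, truncated_pn: int, pn_nbits: int) -> int:
    """Recover a full packet number from its truncated on-the-wire form
    (mirrors the sample algorithm in RFC 9000, Appendix A.3)."""
    expected_pn = largest_pn + 1  # the packet number we expect next
    pn_win = 1 << pn_nbits        # number of values the truncated field can express
    pn_hwin = pn_win // 2
    pn_mask = pn_win - 1
    # Keep the high bits of the expected number and splice in the received low bits.
    candidate_pn = (expected_pn & ~pn_mask) | truncated_pn
    # Choose the candidate closest to the expected packet number.
    if candidate_pn <= expected_pn - pn_hwin and candidate_pn < (1 << 62) - pn_win:
        return candidate_pn + pn_win
    if candidate_pn > expected_pn + pn_hwin and candidate_pn >= pn_win:
        return candidate_pn - pn_win
    return candidate_pn


print(encode_varint(37).hex())               # 25
print(decode_varint(bytes.fromhex("7bbd")))  # (15293, 2)
# One byte (8 bits) on the wire is enough while few packets are in flight:
print(decode_packet_number(largest_pn=1000, truncated_pn=0xEA, pn_nbits=8))  # 1002
```

Small values such as stream IDs or frame lengths thus cost a single byte, and a sender only needs a longer packet number field when many packets are unacknowledged at once.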

LiveVideoStack: While HTTP/3 adoption is growing, there are still major challenges to address. Could you talk a little bit about it?

Robin Marx: As I said before, HTTP/3 and QUIC are notoriously complex to set up and configure correctly and securely. We have several of the big companies that have put significant effort into doing this and who are now offering this as an easy-to-use commercial service. However, it will take considerably longer before it is feasible for smaller companies or individuals to do this themselves, at least if you want to use all the advanced features such as 0-RTT, connection migration or robust load balancing.

Additionally, many network administrators are still hesitant to allow QUIC on their networks. This is for two reasons.

Firstly, QUIC is deployed on top of UDP, mainly to make it easier to deploy on the Internet (most middleboxes already know of UDP, but wouldn’t know what to do with QUIC running directly on top of the IP protocol). Historically, however, UDP has often been used for all kinds of (Denial-of-Service) attacks, and many networks only allow it for a select few use cases (such as DNS). While QUIC integrates mitigations for most of the known UDP attacks (such as built-in amplification attack limits), most network admins might still be hesitant to just allow it without proper firewall support. Firewall vendors, however, have been very slow to provide this due to the protocol complexity, and because most end user software like browsers automatically falls back to HTTP/2 if QUIC is blocked.
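The "built-in amplification attack limits" refer to QUIC’s anti-amplification rule (RFC 9000, Section 8.1): until a server has validated a client’s address, it may send at most three times as many bytes as it has received from that address, so a spoofed source address cannot be used to make the server flood a victim. A client’s first Initial datagram must also be padded to at least 1200 bytes (Section 14.1), which gives the server enough budget to reply. The class below is a hypothetical bookkeeping sketch of that rule, not code from an actual QUIC stack.

```python
class AntiAmplificationBudget:
    """Hypothetical helper: server-side send budget for one unvalidated client address.

    Before the address is validated, the server may send at most
    LIMIT_FACTOR times the bytes it has received from that address.
    """

    LIMIT_FACTOR = 3  # from RFC 9000, Section 8.1

    def __init__(self) -> None:
        self.bytes_received = 0
        self.bytes_sent = 0
        self.address_validated = False

    def on_receive(self, datagram_size: int) -> None:
        self.bytes_received += datagram_size

    def on_address_validated(self) -> None:
        # e.g. after processing a Handshake packet from the client,
        # or after the client echoes a valid Retry token
        self.address_validated = True

    def can_send(self, datagram_size: int) -> bool:
        if self.address_validated:
            return True  # the limit no longer applies
        return self.bytes_sent + datagram_size <= self.LIMIT_FACTOR * self.bytes_received

    def on_send(self, datagram_size: int) -> None:
        self.bytes_sent += datagram_size


# A padded 1200-byte Initial lets the server answer with up to 3600 bytes.
budget = AntiAmplificationBudget()
budget.on_receive(1200)
print(budget.can_send(3600), budget.can_send(3601))  # True False
```

Note that this limit protects third parties from reflected traffic; it does not by itself give a firewall any visibility into the connection, which is the concern raised in the next paragraph.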

Secondly, QUIC encrypts so much of the packet metadata that it becomes difficult, if not impossible, to provide TCP-esque firewalling or network health tracking services (say, tracking round-trip times or packet loss statistics) for QUIC traffic. As such, network owners will have much less control over which types of connections are allowed, and far fewer options to steer this traffic without several additional steps (for example, the qlog project can help here, but requires interactions with the internal server endpoints to extract data, which is not needed for TCP). This increased complexity will again be a barrier to broader deployment in the short to medium term.

LiveVideoStack: As you see it, will QUIC eventually replace TCP, or just improve on it?

Robin Marx: I don’t think QUIC will ever replace TCP 100%. You will always have people choosing TCP for its simpler setup or running legacy applications that cannot be updated easily. One example that comes to mind is Netflix, who have invested a tremendous amount of money to optimize their TCP+TLS+HTTP/2 stack for video streaming directly from the FreeBSD kernel. They are unlikely to switch to HTTP/3 soon. I do feel that over time most applications will switch to QUIC, and that new application-level protocols will be designed for use with QUIC only, discarding TCP.

LiveVideoStack: How do you see the future evolution of internet protocols? What do you think the internet will be like in the future?

Robin Marx: One of the main reasons to encrypt QUIC so heavily is to prevent it from becoming stale and difficult to evolve. This is one of the key problems with TCP; it is deployed so widely and implemented in so many different devices, that changing anything about the core protocol requires updating most of those devices, which can take literally over a decade.

With QUIC, the hope is that only the clients and servers will have to be updated to add new features, while other appliances like firewalls and load balancers can remain stable (at least after adding initial QUIC support). I’m not entirely sure whether this will actually happen (you can still do TLS interception/proxying with QUIC after all), but I do feel it’s a major step in the right direction.

I think we will probably use QUIC and HTTP/3 (or at least their evolved versions) for some decades before we again decide we need something new to deal with the new opportunities of quantum networks and interplanetary communications ;)

LiveVideoStack: Robin, you’ve been working on HTTP/2, HTTP/3 and QUIC for many years. How did you get involved in it?

Robin Marx: My original work started with HTTP/2, and that was mainly an already existing research project that I got hired for. This was in 2015, when the HTTP/2 protocol was freshly standardized by the IETF and starting to be deployed widely.

My research was about how much HTTP/2 could improve performance, as at that time people were claiming very impressive gains of up to 50% over HTTP/1.1. Even my early testing however showed that such gains almost never happened; in fact, HTTP/2 was quite often even a bit slower. This led me to discover and publish some problems and bugs in popular browser implementations, which gave our team some “street cred” in the community.

Then, around 2017, I was debating whether to stick with HTTP/2 (which probably wouldn’t have too many more interesting discoveries) or switch to something completely different. Just at that time, however, QUIC implementation work was starting in earnest at the IETF. I assigned one of my master’s students the task of trying to create a simple initial QUIC implementation, to see if this would be interesting. That turned out to be a much more difficult task than expected, and I had to join in to make it work. In that process, we got in touch via GitHub with engineers in the IETF who were also working on QUIC implementations.

It quickly became obvious that we were not the only ones struggling to implement QUIC (and later HTTP/3) and to verify if everything worked correctly. This was especially because the protocols kept changing in very big ways in those days, often requiring us to rewrite large parts of our codebases. As such, I started working on some tools and visualizations to help us debug and verify our own implementation’s behavior.

This approach worked so well for us that we decided to share it with other implementers as well. While we didn’t expect there to be much interest (these other implementers were from companies like Facebook and Cloudflare after all), the opposite was true. Many stacks decided to implement our proposed qlog format and started using our qvis tools to help debug their systems. Over time, we extended these tools and helped find many implementation bugs and inefficiencies, which we were then also able to publish in academic venues so I could finish my PhD :)

Long story short, I think we saw a concrete problem that needed solving and were able to solve it thanks to our deep involvement with the IETF community.

LiveVideoStack: Could you offer some useful advice to people who are starting to work on protocols?

Robin Marx: I would strongly recommend reaching out to the people that participate in the IETF (via mailing lists, GitHub repositories, or by (remotely) attending IETF meetings). These are almost all engineers at the larger companies, so they are very technical and down to earth. They are also surprisingly friendly and welcoming of new people, willing to answer even basic questions with a smile.

You should be prepared to make it a commitment however… Protocol work can get deeply complex and you will need some time to really get up to speed with the IETF’s way of working and its history if you want to really have an impact.

LiveVideoStack: You said on Twitter that you are going to work as a Web Performance Expert at Akamai. What are you expecting from this new role?

Robin Marx: Since beginning my PhD research, I have wanted to work for a CDN company such as Akamai. CDNs are some of the most interesting setups to work with, due to their inherently global nature and heavy focus on both performance and security. They have to be at the cutting edge of, for example, new protocols to remain competitive, and as such they also need experts to help not only internal teams but also outside stakeholders, like their customers, make sense of new technologies.

At Akamai, I will mainly help provide that bridge between customers and internal expertise, by explaining the inner workings of protocols and other Web (performance) related technologies in an approachable manner. This helps translate the customers’ business needs into how our technology can help meet them.

This will be a somewhat less technical role than I’m used to, but it will focus on some of my other strengths that I’ve been able to develop during my PhD, such as technical writing and public speaking. I’m very much looking forward to it :)

LiveVideoStack: Last but not least, if you were given a chance to have a conversation with an Internet pioneer, who would you most want to talk to? What would you like to talk about?

Robin Marx: I would love to have a discussion with Van Jacobson and wish I could still have a chat with Sally Floyd (sadly she passed away some years ago). These were some of the people that pioneered work around congestion control algorithms.

The more I learn about protocols and network performance, the clearer it becomes how crucial the underlying congestion control mechanisms are (both those built into protocols like TCP and QUIC, and those in the networks themselves, with things like Active Queue Management).

While congestion control algorithms by themselves are often not that difficult to understand conceptually, really and deeply knowing how they practically work and behave in a real, worldwide and modern network is something else entirely. I would love to get some insights from the very early days and from the people who have seen this evolve over time, to help me understand some of the finer points.

For example, I was told by some Google engineers that tooling similar to my qvis visualizations had already existed internally at the company for some time, and that in some aspects it was quite a bit better than my versions. It was implemented by... Van Jacobson himself :) I’d like to ask him how to improve my work and how to, maybe, eventually surpass his someday.

To learn more about HTTP/3, you can read Robin’s articles at smashingmagazine.com: https://www.smashingmagazine.com/author/robin-marx/

Finally, we'd like to thank leeoxiang, Li Chao and Sid Wang, who contributed to this interview.